A minimal Django testing style guide

Awesome style! Around 90% coverage! Image credit: dogpages.net

You started on an MVP. Usage is increasing. The occasional bug.

More users. Suddenly this MVP is not an MVP anymore. A core business process depends on this system your team is maintaining.

You ship a user-reported bug fix. Users report another two. Sound familiar?

Since it was an MVP, you paid little attention to writing automated tests.

What is the current codebase’s test coverage? Wait. Do you even measure it?

This post is not about setting the gold standard on how to write tests for your Django application.

It’s about setting as simple a direction as possible. And getting started. Wherever you are.

It is also an attempt to document some of my practices around writing tests for Django projects.

Terminology

As in this Python testing style guide I followed in the past:

I do not make a distinction between unit tests and integration tests. I generally refer to these types of tests as unit tests. I do not go out of my way to isolate a single unit of code and test it without consideration of the rest of the project. I do try to isolate a single unit of behaviour.

Where do I place tests?

The greatest advantage of having tests is that they are living docs about your code. The inputs/outputs tested either work as expected or they don’t. They cannot go out of date. Unlike written docs.

So it’s important to have an obvious way to tell where the test for a piece of code resides in your codebase.

pytest’s conventions for Python test discovery are simple to agree on and follow.

Let’s say you have an Article model in app articles at articles/models.py:

    class Article(models.Model):
        ...

Tests for this model class would go in articles/tests/test_models.py:

    class ArticleTest(TestCase):
        ...

In a similar fashion, if we have a function in articles/utils.py:

    def do_something():
        ...

its tests would go in articles/tests/test_utils.py:

    class DoSomethingTest(TestCase):
        def test_do_something(self):
            ...

Why create a DoSomethingTest extending a TestCase? Rather than a standalone test function?

The default way to write tests in Django is using Python’s built-in unittest module. This defines tests using a class-based approach.

django.test.TestCase is in fact a subclass of unittest.TestCase that adds database-oriented goodies. It runs each test inside a transaction that is rolled back when the test ends, so tests are isolated from each other’s data. Django’s writing and running tests docs provide more details on this.

Naming test classes

As in the case of “placing” a test in your codebase, a TestCase’s name should be indicative of the class (or standalone function) being tested. So for classes:

    class Article(models.Model):
        ...

    class ArticleTest(TestCase):
        ...

and for functions:

    def do_something():
        ...

    class DoSomethingTest(TestCase):
        def test_do_something(self):
            ...

Naming test functions

As mentioned above, your individual tests should test for a single unit of behaviour. In the below case, behaviour depends on the data (state) the logic depends on:

    from django.db import models


    class Article(models.Model):
        title = models.CharField(max_length=32)
        slug = models.CharField(max_length=32, unique=True)
        content = models.TextField()

        def __str__(self):
            return self.title

        def get_short_title(self):
            # Titles longer than 15 characters are cut to 12 characters plus "..."
            if len(self.title) > 15:
                return self.title[: (15 - 3)] + "..."
            return self.title

So we have to test get_short_title based on the title’s length. The more “explicit” the name the better. Have test function names that reflect the state relevant for the expected result:

    class ArticleTest(TestCase):
        def test_get_short_title_title_is_longer_than_15_characters_yes(self):
            ...

        def test_get_short_title_title_is_longer_than_15_characters_no(self):
            ...
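To make the naming concrete, here is a minimal sketch of what the two test bodies could look like. To keep it runnable without a database, Article here is a plain-Python stand-in for the model (an assumption for this sketch only). In a real project you would test the model class itself and let Django’s test runner collect the tests.

```python
import unittest


class Article:
    """Plain-Python stand-in for the Django model (assumption for this sketch)."""

    def __init__(self, title):
        self.title = title

    def get_short_title(self):
        if len(self.title) > 15:
            return self.title[: (15 - 3)] + "..."
        return self.title


class ArticleTest(unittest.TestCase):
    def test_get_short_title_title_is_longer_than_15_characters_yes(self):
        # 16 characters: one over the limit, so the title is shortened
        article = Article(title="A" * 16)
        self.assertEqual(article.get_short_title(), "A" * 12 + "...")

    def test_get_short_title_title_is_longer_than_15_characters_no(self):
        # exactly 15 characters: not longer than the limit, so it is left untouched
        article = Article(title="A" * 15)
        self.assertEqual(article.get_short_title(), "A" * 15)


# Run the two tests programmatically so the sketch is self-contained.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(ArticleTest)
result = unittest.TextTestRunner(verbosity=2).run(suite)
```

Each test sets up only the state its name mentions (the title length) and asserts a single outcome.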

Notice how the whole class name to function name structure tells us:

- name of class: Article

- name of function: get_short_title

- state (input) determining behaviour (output) per test

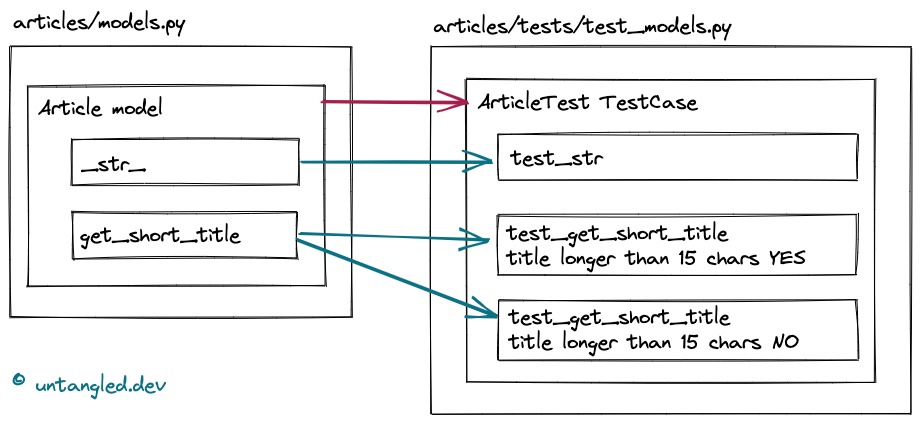

This also makes obvious what should be changed in case you move/rename classes/functions. Drawn out it looks like this:

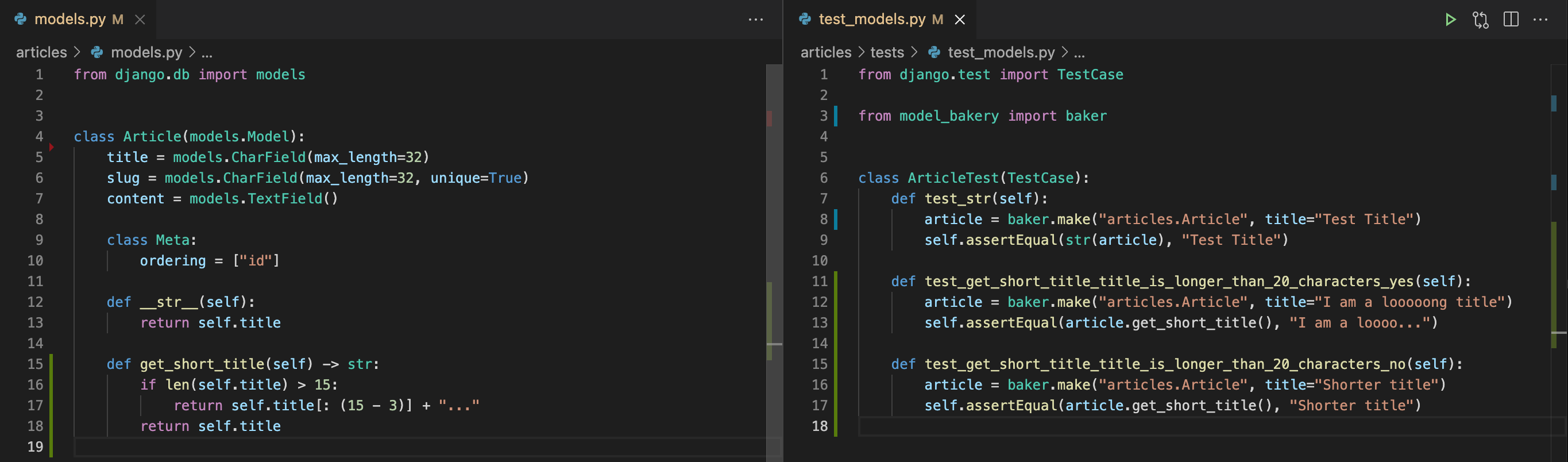

Below is a screenshot of my setup. With code on the left, and test code on the right. No fancy screen required. I took this on a laptop screen:

I find this a game changer when reading code. Seeing a function’s inputs and expected outputs next to the function’s code speeds things up. A lot.

Creating test data

Use factory libraries to standardise test data creation.

The most popular two in Django are factory-boy and model bakery. The latter is simpler, so we use it in this article’s examples. But the former is more feature-rich.

As a guiding principle keep your factory code light. I.e. specify only the fields that need to be set for the state required by the test.

For example, when creating the article to test __str__:

article = baker.make("articles.Article", title="Test Title")

we are not passing slug or content. These would be noise for our test’s purpose.

The way we create data via factories should be about the data state expected by the test. No more, no less.

Why not just use fixtures? You may already have fixtures, with a lot of data as expected in production, lying around.

Fixtures are overkill for tests because:

- Maintaining them is hell. For example, what if you rename a model field?

- Maintaining fixtures is usually append-only. So the fixture’s data increases over time, loading a lot of data the test doesn’t need, every time the test is run.

- Fixtures make it hard to tell what data is being loaded. It’s even harder to tell why a fixture is loading the data it is loading in the context of tests!

I find eliminating fixtures in tests is one of the most effective steps to speed up a test suite’s runtime.

Not convinced? Section 11.1 in Speed Up Your Django Tests goes into more detail on why fixture files should just be avoided.

Testing views

The above basic examples were all about testing models. Models are the usual starting point in writing a Django app.

But a lot of logic resides in views. And the forms and/or serializers that go with them.

Unit vs integration, again

Django and DRF provide the test client and APIClient to make HTTP calls against your application programmatically.

The problem with making full HTTP calls against your application is that not only your view code will run. HTTP requests pass through the whole Django middleware machinery, including, for example, authentication.

So when using the test client, you would be doing more of an integration test, rather than a unit test.

The alternative would be instantiating views. And passing requests built with RequestFactory, or APIRequestFactory in DRF, directly to the view functions. The same approach applies to serializers (or forms).

Which way do you go? Ask yourself a couple of questions:

- Is your current test suite running slow?

- Do you have a lot of added middleware a request needs to go through?

If both answers are “no” (especially if you have no tests!) then the test client is likely enough to start with.

The upside of making HTTP requests is that you are testing the whole URL/view binding. Rather than a standalone class-based view method like get_queryset.

Let’s say a FE engineer on your team asks you a question about how a particular URL is supposed to work. Having tests with the test client allows you to be 100% sure of what the view at that URL is supposed to do. This is an example of what I meant above by living docs.

How to structure your view tests

When using the test client I find it helpful to think of my application as a “black box”:

- Assuming there is this data set up (inputs)

- What should happen if I submit this request to this URL? (action)

- Assert response returned (output)

The pattern is the same as when writing tests for functions. But in this case rather than calling the specific function under test, the test makes an HTTP request.

Let’s say we want CRUD views for our Article model. This is what we’d find in articles/serializers.py:

    from rest_framework import serializers

    from .models import Article


    class ArticleSerializer(serializers.ModelSerializer):
        class Meta:
            model = Article
            fields = "__all__"

And in articles/views.py

    from rest_framework import generics

    from .models import Article
    from .serializers import ArticleSerializer


    class ArticleListCreateAPIView(generics.ListCreateAPIView):
        queryset = Article.objects.all()
        serializer_class = ArticleSerializer


    class ArticleDetailAPIView(generics.RetrieveUpdateDestroyAPIView):
        queryset = Article.objects.all()
        serializer_class = ArticleSerializer

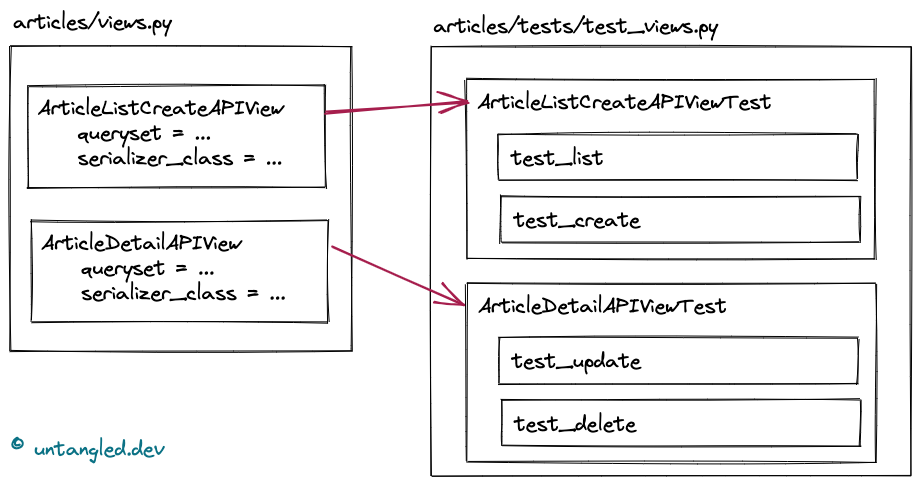

These are the tests we’d need to write to assert the API behaves as expected:

We do not need to write separate tests for the serializer, as the view tests already exercise it.

Testing configuration

In many cases, as shown above, and especially when using class-based views, functionality is achieved through configuration of classes rather than coding.

How to test in this case? The quickest way, i.e. the minimal in terms of time, is to test using the client.

Let’s extend our view to be able to order articles by title. The easiest option would be to extend ArticleListCreateAPIViewTest.test_list to:

- create test data with different titles to be able to assert results order

- call the URL with the ordering parameter

- test outcome

View code:

    from rest_framework import filters, generics


    class ArticleListCreateAPIView(generics.ListCreateAPIView):
        queryset = Article.objects.all()
        serializer_class = ArticleSerializer
        # OrderingFilter reads the "ordering" query parameter
        filter_backends = [filters.OrderingFilter]
        ordering_fields = ["title"]

Test code:

    from model_bakery import baker
    from rest_framework import status
    from rest_framework.test import APITestCase


    class ArticleListCreateAPIViewTest(APITestCase):
        def test_list(self):
            article1 = baker.make("articles.Article", title="Title A")
            article2 = baker.make("articles.Article", title="Title C")
            article3 = baker.make("articles.Article", title="Title B")

            # default ordering by id:
            response = self.client.get("/api/articles/")
            self.assertEqual(response.status_code, status.HTTP_200_OK)
            self.assertEqual(
                [article["id"] for article in response.data],
                [article1.id, article2.id, article3.id],
            )

            # ordering by title:
            response = self.client.get("/api/articles/?ordering=title")
            self.assertEqual(response.status_code, status.HTTP_200_OK)
            self.assertEqual(
                [article["id"] for article in response.data],
                [article1.id, article3.id, article2.id],
            )

While this is testing “code owned by others”, these tests ensure that the API provided works as expected.

Diving deeper

This guide is intended to be minimal. As such it misses going into some important aspects of writing tests.

Test runners other than TestCase

Django’s TestCase extends unittest’s TestCase. And DRF’s APITestCase extends Django’s TestCase. This guide does not go into other test runners like pytest, which takes a more functional approach.

Mocking

What if your code needs to read data from an external API? Or upload a file to S3?

If the test needs to interact with external systems, it would become a “system” test. A test that depends on components “outside” the local environment where it runs.

Your automated tests should always mock such interactions.

Getting into mocking is out of this style guide’s scope. The tool Python provides is unittest.mock.
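Just to give a taste, here is a minimal sketch of mocking a call to an external API with unittest.mock. The fetch_article_titles helper and the example.com URL are made up for this illustration. The point is only that the test never touches the network.

```python
import io
import json
import unittest
import urllib.request
from unittest import mock


def fetch_article_titles(url):
    """Hypothetical helper that reads a JSON list of articles from an external API."""
    with urllib.request.urlopen(url) as response:
        return [item["title"] for item in json.load(response)]


class FetchArticleTitlesTest(unittest.TestCase):
    @mock.patch("urllib.request.urlopen")
    def test_fetch_article_titles(self, mock_urlopen):
        # The fake response stands in for the network call.
        body = io.BytesIO(b'[{"title": "Title A"}, {"title": "Title B"}]')
        mock_urlopen.return_value.__enter__.return_value = body

        titles = fetch_article_titles("https://example.com/api/articles/")

        self.assertEqual(titles, ["Title A", "Title B"])
        mock_urlopen.assert_called_once_with("https://example.com/api/articles/")


# Run the test programmatically so the sketch is self-contained.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(FetchArticleTitlesTest)
result = unittest.TextTestRunner(verbosity=2).run(suite)
```

The test asserts both the outcome (the titles) and the interaction (which URL was requested), while the external system stays out of the picture.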

Coverage

On its own, coverage is a “dumb” measure of how many lines in your codebase are “covered by” tests. Why dumb? You can write a bunch of HTTP GET requests in your tests, write zero assertions, and increase coverage a lot. That, of course, doesn’t add any value.

So do not write tests for coverage.

Rather use coverage.py to identify which lines, i.e. behaviour, your tests do not cover.

Call me naive, but I still strive for 100% coverage.

But how to get started?

In any project/team I work with, I fight for a non-negative diff coverage on every PR on CI. Diff coverage definition:

Diff coverage is the percentage of new or modified lines that are covered by tests. This provides a clear and achievable standard for code review: If you touch a line of code, that line should be covered. Code coverage is every developer’s responsibility!
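As a rough illustration of the arithmetic only (not how any particular tool implements it), diff coverage boils down to a set intersection. The diff_coverage function, file names and line numbers below are made up for this sketch.

```python
def diff_coverage(changed_lines, covered_lines):
    """Return the percentage of changed lines that were executed by tests."""
    if not changed_lines:
        return 100.0  # nothing changed, so nothing is left uncovered
    covered_changes = changed_lines & covered_lines
    return 100.0 * len(covered_changes) / len(changed_lines)


# Lines touched by a hypothetical PR, as (file, line number) pairs.
changed = {
    ("articles/models.py", 12),
    ("articles/models.py", 13),
    ("articles/views.py", 7),
    ("articles/views.py", 8),
}

# Lines the test suite executed, as a coverage tool would report them.
covered = {
    ("articles/models.py", 12),
    ("articles/models.py", 13),
    ("articles/views.py", 7),
}

percentage = diff_coverage(changed, covered)
print(f"Diff coverage: {percentage:.0f}%")  # 3 of the 4 changed lines are covered
```

Here the one uncovered line, articles/views.py line 8, is exactly what a reviewer would ask a test for.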

Over time you’ll get to 100% coverage 🚀

Speeding tests up

In this guide we go over creating test data using factories as opposed to fixtures. But that’s about it.

In the “views” section I mentioned calling views directly, bypassing the middleware, to only test the behaviour “under test”.

This is scratching the surface. Speed Up Your Django Tests by Adam Johnson is the best resource on this topic.

And not only that. It is in fact a great resource when starting to write tests from scratch. The below chapters, in particular, are an excellent guide:

- Chapter 10, Test Structure, on how to organise your tests’ code.

- Chapter 11, Test Data, on best practices in managing data for your tests, in the fastest way possible.

- Chapter 12, Targeted Mocking, on how to mock behaviour the right way.

References / Further Reading

- unittest module - Python’s standard library.

- Testing in Django - Django docs.

- My Python testing style guide - Thea “Stargirl” Flowers. Main inspiration for this article.

- Python Testing Style Guide - Octopus Energy. This style guide is a gold mine for any Python project. As in my article’s case, no need to treat it as dogma. But it provides insight into how a large organisation, with presumably a large codebase, handles its “style guide”. Kudos for sharing!

- Why and how I get 100% test coverage for my Django projects, and you should too - Sasha Romijn.

- Speed Up Your Django Tests - Adam Johnson. Can’t recommend this book enough!
